Langfuse 🪢

What is Langfuse? Langfuse is an open source LLM engineering platform that helps teams trace API calls, monitor performance, and debug issues in their AI applications.

Tracing LangChain

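Langfuse Tracing integrates with LangChain through LangChain callbacks (Python, JS). As a result, the Langfuse SDK automatically creates a nested trace for every run of your LangChain application, which lets you log, analyze, and debug it.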
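LangChain passes each callback a `run_id` and, for nested steps, a `parent_run_id`, and a tracing handler can use these IDs to assemble a nested trace. The toy collector below illustrates that idea with the standard library only. It is not Langfuse's implementation: `ToyTraceCollector`, `on_start`, `on_end`, and `render` are hypothetical, simplified names, and the event sequence is a made-up example of what a `prompt | llm` chain might emit.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional
from uuid import UUID, uuid4


@dataclass
class Span:
    """One step of a run (e.g. a chain, prompt, or LLM call)."""
    name: str
    run_id: UUID
    children: List["Span"] = field(default_factory=list)
    ended: bool = False


class ToyTraceCollector:
    """Hypothetical toy collector: builds a span tree from start/end events."""

    def __init__(self) -> None:
        self.spans: Dict[UUID, Span] = {}
        self.roots: List[Span] = []

    def on_start(self, name: str, run_id: UUID, parent_run_id: Optional[UUID] = None) -> None:
        span = Span(name=name, run_id=run_id)
        self.spans[run_id] = span
        if parent_run_id is None:
            self.roots.append(span)  # top-level run: starts a new trace
        else:
            self.spans[parent_run_id].children.append(span)  # nested under its parent run

    def on_end(self, run_id: UUID) -> None:
        self.spans[run_id].ended = True


def render(span: Span, depth: int = 0) -> List[str]:
    """Indent each span name by its nesting depth."""
    lines = ["  " * depth + span.name]
    for child in span.children:
        lines.extend(render(child, depth + 1))
    return lines


# Simulated events for a simple prompt | llm chain (hypothetical sequence)
collector = ToyTraceCollector()
chain_id, prompt_id, llm_id = uuid4(), uuid4(), uuid4()
collector.on_start("RunnableSequence", chain_id)
collector.on_start("ChatPromptTemplate", prompt_id, parent_run_id=chain_id)
collector.on_end(prompt_id)
collector.on_start("ChatOpenAI", llm_id, parent_run_id=chain_id)
collector.on_end(llm_id)
collector.on_end(chain_id)

print("\n".join(render(collector.roots[0])))
```

The printed tree has `RunnableSequence` at the top with the prompt and LLM steps indented beneath it, which is the kind of nesting you see for a chain run in the Langfuse UI.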

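You can configure the integration either (1) through constructor arguments or (2) through environment variables. Get your Langfuse credentials by signing up at cloud.langfuse.com or by self-hosting Langfuse.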
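One way to picture how the two options combine is a lookup that prefers explicit arguments, falls back to the environment variables, and finally to a default host. The sketch below is a hypothetical stdlib-only helper, `resolve_langfuse_config`; it assumes that explicit arguments take precedence over environment variables and that the default host is the EU cloud URL, so check the SDK documentation for the exact behavior.

```python
import os
from typing import Dict, Mapping, Optional

# Assumed default: the EU cloud host used in the examples of this guide
DEFAULT_HOST = "https://cloud.langfuse.com"


def resolve_langfuse_config(
    public_key: Optional[str] = None,
    secret_key: Optional[str] = None,
    host: Optional[str] = None,
    env: Mapping[str, str] = os.environ,
) -> Dict[str, str]:
    """Hypothetical helper: explicit arguments win, then environment variables, then defaults."""
    config = {
        "public_key": public_key or env.get("LANGFUSE_PUBLIC_KEY"),
        "secret_key": secret_key or env.get("LANGFUSE_SECRET_KEY"),
        "host": host or env.get("LANGFUSE_HOST") or DEFAULT_HOST,
    }
    missing = [name for name in ("public_key", "secret_key") if not config[name]]
    if missing:
        raise ValueError(f"Missing Langfuse credentials: {', '.join(missing)}")
    return config


example_env = {"LANGFUSE_PUBLIC_KEY": "pk-lf-env", "LANGFUSE_SECRET_KEY": "sk-lf-env"}
print(resolve_langfuse_config(public_key="pk-lf-arg", env=example_env))
# {'public_key': 'pk-lf-arg', 'secret_key': 'sk-lf-env', 'host': 'https://cloud.langfuse.com'}
```

Passing `env` explicitly keeps the helper testable without touching the real process environment.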

Constructor arguments

```bash
pip install langfuse
```

```python
# Initialize Langfuse handler
from langfuse.callback import CallbackHandler

langfuse_handler = CallbackHandler(
    secret_key="sk-lf-...",
    public_key="pk-lf-...",
    host="https://cloud.langfuse.com",  # 🇪🇺 EU region
    # host="https://us.cloud.langfuse.com",  # 🇺🇸 US region
)

# Your Langchain code: `chain` stands for your existing chain or LCEL runnable

# Add Langfuse handler as callback (classic and LCEL)
chain.invoke({"input": "<user_input>"}, config={"callbacks": [langfuse_handler]})
```

Environment variables

```bash
LANGFUSE_SECRET_KEY="sk-lf-..."
LANGFUSE_PUBLIC_KEY="pk-lf-..."

# 🇪🇺 EU region
LANGFUSE_HOST="https://cloud.langfuse.com"
# 🇺🇸 US region
# LANGFUSE_HOST="https://us.cloud.langfuse.com"
```

```python
# Initialize Langfuse handler (reads the LANGFUSE_* environment variables)
from langfuse.callback import CallbackHandler

langfuse_handler = CallbackHandler()

# Your Langchain code: `chain` stands for your existing chain or LCEL runnable

# Add Langfuse handler as callback (classic and LCEL)
chain.invoke({"input": "<user_input>"}, config={"callbacks": [langfuse_handler]})
```
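If you keep the variables above in a `.env` file, they need to be loaded into the process environment before `CallbackHandler()` is created. In practice `python-dotenv`'s `load_dotenv()` is the usual choice; the stdlib-only `parse_env_lines` below is a hypothetical minimal stand-in that only understands simple `KEY="value"` lines and `#` comments.

```python
import os
from typing import Dict


def parse_env_lines(text: str) -> Dict[str, str]:
    """Hypothetical minimal parser for KEY="value" lines; skips blank lines and # comments."""
    values: Dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            continue  # not a KEY=value line
        value = value.strip()
        # Drop one pair of matching surrounding quotes, if present
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        values[key.strip()] = value
    return values


ENV_TEXT = """
LANGFUSE_SECRET_KEY="sk-lf-..."
LANGFUSE_PUBLIC_KEY="pk-lf-..."
# EU region
LANGFUSE_HOST="https://cloud.langfuse.com"
# US region
# LANGFUSE_HOST="https://us.cloud.langfuse.com"
"""

settings = parse_env_lines(ENV_TEXT)
# Load before creating CallbackHandler(); keep any variable that is already set
for name, value in settings.items():
    os.environ.setdefault(name, value)
```

Using `setdefault` means variables already exported in your shell are not overwritten by the file.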

To learn how to use this integration together with other Langfuse features, check out this end-to-end example.

Tracing LangGraph

This section shows how Langfuse, through its LangChain integration, helps you debug, analyze, and iterate on your LangGraph application.

Initialize Langfuse

Note: You need to run at least Python 3.11 (GitHub issue).
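To fail early in a notebook that runs on an older interpreter, you can compare `sys.version_info` against the 3.11 minimum from the note above. `python_is_supported` is a hypothetical helper name; it only prints a warning and does not stop execution.

```python
import sys
from typing import Sequence, Tuple

MIN_PYTHON: Tuple[int, int] = (3, 11)  # minimum stated in the note above


def python_is_supported(version: Sequence[int] = sys.version_info, minimum: Tuple[int, int] = MIN_PYTHON) -> bool:
    """Compare (major, minor) of the given version against the minimum."""
    return tuple(version[:2]) >= minimum


if not python_is_supported():
    print(f"Python {sys.version_info.major}.{sys.version_info.minor} detected; this guide assumes 3.11+")
```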

Initialize the Langfuse client with the API keys from the project settings in the Langfuse UI, and add them to your environment.

```python
%pip install langfuse
%pip install langchain langgraph langchain_openai langchain_community

import os

# get keys for your project from https://cloud.langfuse.com
os.environ["LANGFUSE_PUBLIC_KEY"] = "pk-lf-***"
os.environ["LANGFUSE_SECRET_KEY"] = "sk-lf-***"
os.environ["LANGFUSE_HOST"] = "https://cloud.langfuse.com"  # for EU data region
# os.environ["LANGFUSE_HOST"] = "https://us.cloud.langfuse.com"  # for US data region

# your openai key
os.environ["OPENAI_API_KEY"] = "***"
```
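A missing or swapped key is easy to overlook, so a quick sanity check after setting the variables can help. `check_env` below is a hypothetical stdlib-only sketch: it assumes the `pk-lf-`/`sk-lf-` prefixes seen in the placeholders above, treats all four variables set in the cell as required, and never prints the secret values themselves.

```python
import os
from typing import List, Mapping

REQUIRED = ("LANGFUSE_PUBLIC_KEY", "LANGFUSE_SECRET_KEY", "LANGFUSE_HOST", "OPENAI_API_KEY")
# Prefixes assumed from the key placeholders used in this guide
EXPECTED_PREFIXES = {"LANGFUSE_PUBLIC_KEY": "pk-lf-", "LANGFUSE_SECRET_KEY": "sk-lf-"}


def check_env(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return human-readable problems; an empty list means the setup looks plausible."""
    problems: List[str] = []
    for name in REQUIRED:
        if not env.get(name):
            problems.append(f"{name} is not set")
    for name, prefix in EXPECTED_PREFIXES.items():
        value = env.get(name, "")
        if value and not value.startswith(prefix):
            # Report only the variable name: the value may be a secret
            problems.append(f"{name} does not start with {prefix!r}")
    return problems


for problem in check_env():
    print("Setup problem:", problem)
```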

Simple chat app with LangGraph

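What we will do in this section:

- Build a support chatbot in LangGraph that can answer common questions

- Trace the chatbot's input and output using Langfuse

We will start with a basic chatbot and build a more advanced multi-agent setup in the next section, introducing key LangGraph concepts along the way.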

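Create Agent

First, create a StateGraph. A StateGraph object defines the structure of our chatbot as a state machine. We will add nodes to represent the LLM and the functions the chatbot can call, and edges to specify how the bot transitions between these functions.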
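To make the state-machine idea concrete, here is a toy, stdlib-only runner with nodes, an edge, an entry point, and a finish point. `ToyStateGraph` and its methods are hypothetical and heavily simplified (a single `messages` key, at most one outgoing edge per node, a fake LLM); it is not LangGraph's implementation, only an illustration of the concepts that LangGraph's `StateGraph` provides.

```python
from typing import Callable, Dict, List, Optional, Set

SimpleState = Dict[str, List[str]]
NodeFn = Callable[[SimpleState], SimpleState]


class ToyStateGraph:
    """Hypothetical, heavily simplified state machine; not LangGraph's implementation."""

    def __init__(self) -> None:
        self.nodes: Dict[str, NodeFn] = {}
        self.edges: Dict[str, str] = {}
        self.entry: Optional[str] = None
        self.finish: Set[str] = set()

    def add_node(self, name: str, fn: NodeFn) -> None:
        self.nodes[name] = fn

    def add_edge(self, source: str, target: str) -> None:
        self.edges[source] = target

    def run(self, state: SimpleState) -> SimpleState:
        current = self.entry
        while current is not None:
            update = self.nodes[current](state)
            # Append the node's messages rather than overwriting the list
            state = {"messages": state["messages"] + update.get("messages", [])}
            if current in self.finish:
                break  # finish point: stop after this node runs
            current = self.edges.get(current)  # follow the edge, or stop if there is none
        return state


def greet(state: SimpleState) -> SimpleState:
    return {"messages": ["Hi! Let me look that up."]}


def fake_chatbot(state: SimpleState) -> SimpleState:
    # Stand-in for llm.invoke(...): "answers" the first user message
    return {"messages": [f"answer to: {state['messages'][0]}"]}


toy_graph = ToyStateGraph()
toy_graph.add_node("greet", greet)
toy_graph.add_node("chatbot", fake_chatbot)
toy_graph.add_edge("greet", "chatbot")
toy_graph.entry = "greet"
toy_graph.finish.add("chatbot")

result = toy_graph.run({"messages": ["What is Langfuse?"]})
print(result["messages"])
# ['What is Langfuse?', 'Hi! Let me look that up.', 'answer to: What is Langfuse?']
```

The real LangGraph code uses a single `chatbot` node that is both the entry and the finish point; the extra `greet` node here only exists to show an edge being followed.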

```python
from typing import Annotated

from langchain_core.messages import HumanMessage
from langchain_openai import ChatOpenAI
from langgraph.graph import StateGraph
from langgraph.graph.message import add_messages
from typing_extensions import TypedDict


class State(TypedDict):
    # Messages have the type "list". The `add_messages` function in the annotation defines how this state key should be updated
    # (in this case, it appends messages to the list, rather than overwriting them)
    messages: Annotated[list, add_messages]


graph_builder = StateGraph(State)

llm = ChatOpenAI(model="gpt-4o", temperature=0.2)


# The chatbot node function takes the current State as input and returns an update containing the new messages. This is the basic pattern for all LangGraph node functions.
def chatbot(state: State):
    return {"messages": [llm.invoke(state["messages"])]}


# Add a "chatbot" node. Nodes represent units of work. They are typically regular Python functions.
graph_builder.add_node("chatbot", chatbot)

# Add an entry point. This tells our graph where to start its work each time we run it.
graph_builder.set_entry_point("chatbot")

# Set a finish point. This instructs the graph "any time this node is run, you can exit."
graph_builder.set_finish_point("chatbot")

# To be able to run our graph, call "compile()" on the graph builder. This creates a compiled graph that we can invoke (or stream) with our state.
graph = graph_builder.compile()
```
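The `Annotated[list, add_messages]` annotation attaches a reducer to the `messages` key, so node updates are merged into the existing list instead of replacing it. The stdlib-only sketch below reads such reducers from `Annotated` metadata in the same spirit; `append_messages`, `ToyState`, `reducers_for`, and `apply_update` are hypothetical names, and the toy reducer only appends, whereas the real `add_messages` also handles message IDs.

```python
from typing import Annotated, Any, Callable, Dict, List, TypedDict, get_type_hints


def append_messages(left: List[str], right: List[str]) -> List[str]:
    """Toy reducer: append new messages (the real add_messages also handles message IDs)."""
    return left + right


class ToyState(TypedDict):
    messages: Annotated[List[str], append_messages]  # reducer attached via Annotated
    step: int  # no reducer: a new value simply replaces the old one


def reducers_for(schema: type) -> Dict[str, Callable[[Any, Any], Any]]:
    """Collect the reducer function, if any, stored in each key's Annotated metadata."""
    hints = get_type_hints(schema, include_extras=True)
    reducers = {}
    for key, hint in hints.items():
        metadata = getattr(hint, "__metadata__", ())
        if metadata and callable(metadata[0]):
            reducers[key] = metadata[0]
    return reducers


def apply_update(state: Dict[str, Any], update: Dict[str, Any], schema: type) -> Dict[str, Any]:
    """Merge a node's partial update into the state, using reducers where declared."""
    reducers = reducers_for(schema)
    merged = dict(state)
    for key, value in update.items():
        if key in reducers and key in merged:
            merged[key] = reducers[key](merged[key], value)
        else:
            merged[key] = value
    return merged


toy_state = {"messages": ["What is Langfuse?"], "step": 0}
toy_state = apply_update(toy_state, {"messages": ["Langfuse is an LLM engineering platform."], "step": 1}, ToyState)
print(toy_state)
# {'messages': ['What is Langfuse?', 'Langfuse is an LLM engineering platform.'], 'step': 1}
```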

Add Langfuse as a callback to the invocation

Now we add the Langfuse callback handler for LangChain to trace the steps of our application: `config={"callbacks": [langfuse_handler]}`

```python
from langfuse.callback import CallbackHandler

# Initialize Langfuse CallbackHandler for Langchain (tracing)
langfuse_handler = CallbackHandler()

for s in graph.stream(
    {"messages": [HumanMessage(content="What is Langfuse?")]},
    config={"callbacks": [langfuse_handler]},
):
    print(s)
```

{'chatbot': {'messages': [AIMessage(content='Langfuse is a tool designed to help developers monitor and observe the performance of their Large Language Model (LLM) applications. It provides detailed insights into how these applications are functioning, allowing for better debugging, optimization, and overall management. Langfuse offers features such as tracking key metrics, visualizing data, and identifying potential issues in real-time, making it easier for developers to maintain and improve their LLM-based solutions.', response_metadata={'token_usage': {'completion_tokens': 86, 'prompt_tokens': 13, 'total_tokens': 99}, 'model_name': 'gpt-4o-2024-05-13', 'system_fingerprint': 'fp_400f27fa1f', 'finish_reason': 'stop', 'logprobs': None}, id='run-9a0c97cb-ccfe-463e-902c-5a5900b796b4-0', usage_metadata={'input_tokens': 13, 'output_tokens': 86, 'total_tokens': 99})]}}
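Each streamed chunk above is a `{node_name: update}` dict, and the `AIMessage` carries a `usage_metadata` dict. Langfuse records token usage per generation in the trace; if you also want a local total, a small helper can sum it over the chunks. `total_usage` and `_usage_of` are hypothetical names, and the example uses a plain-dict stand-in for the `AIMessage` with the usage numbers copied from the output above.

```python
from typing import Any, Dict, Iterable


def _usage_of(message: Any) -> Dict[str, int]:
    """Read usage_metadata from a message object or from a plain dict stand-in."""
    if isinstance(message, dict):
        return message.get("usage_metadata") or {}
    return getattr(message, "usage_metadata", None) or {}


def total_usage(chunks: Iterable[Dict[str, Any]]) -> Dict[str, int]:
    """Sum token usage over streamed updates shaped like {node_name: {"messages": [...]}}."""
    totals = {"input_tokens": 0, "output_tokens": 0, "total_tokens": 0}
    for chunk in chunks:
        for node_update in chunk.values():
            for message in node_update.get("messages", []):
                usage = _usage_of(message)
                for key in totals:
                    totals[key] += usage.get(key, 0)
    return totals


# Plain-dict stand-in for the streamed update printed above (usage numbers copied from it)
example_chunks = [
    {"chatbot": {"messages": [{"content": "Langfuse is a tool ...", "usage_metadata": {"input_tokens": 13, "output_tokens": 86, "total_tokens": 99}}]}},
]
print(total_usage(example_chunks))
# {'input_tokens': 13, 'output_tokens': 86, 'total_tokens': 99}
```

With the real graph you could pass the `graph.stream(...)` iterator straight to `total_usage`, since the chunks have the same shape; messages without `usage_metadata` simply contribute zero.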

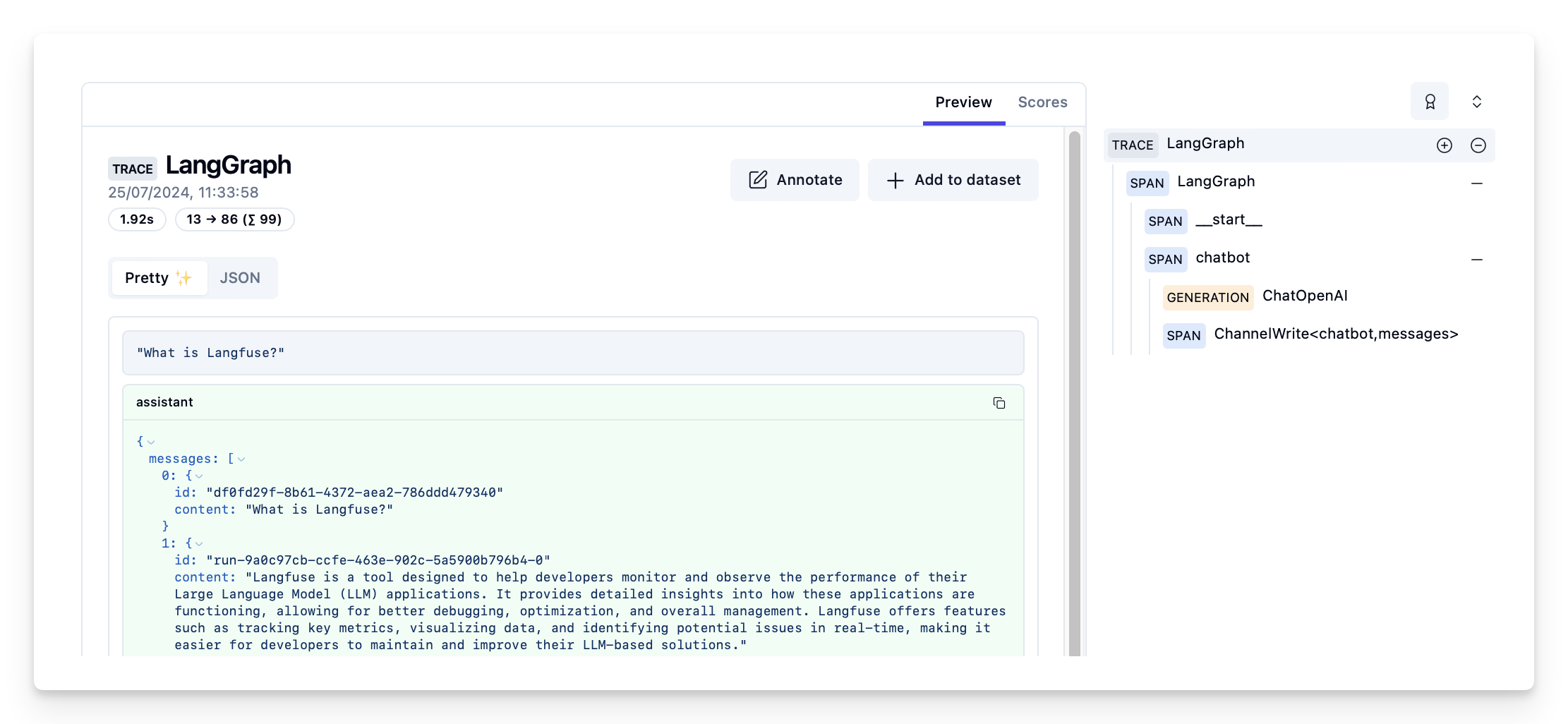

View the trace in Langfuse

Example trace in Langfuse: https://cloud.langfuse.com/project/cloramnkj0002jz088vzn1ja4/traces/d109e148-d188-4d6e-823f-aac0864afbab
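The example link follows a `/project/<project_id>/traces/<trace_id>` shape. Assuming that shape holds for your own project (inferred from this one URL, not from an API reference), a pair of hypothetical helpers can build or split such links, for example to log a clickable trace URL next to a test result.

```python
from urllib.parse import urlparse


def build_trace_url(host: str, project_id: str, trace_id: str) -> str:
    """Hypothetical helper following the /project/<project_id>/traces/<trace_id> shape of the example link."""
    return f"{host.rstrip('/')}/project/{project_id}/traces/{trace_id}"


def parse_trace_url(url: str) -> dict:
    """Split a trace link of that shape back into its project and trace IDs."""
    parts = urlparse(url).path.strip("/").split("/")
    if len(parts) != 4 or parts[0] != "project" or parts[2] != "traces":
        raise ValueError(f"Unexpected trace URL: {url}")
    return {"project_id": parts[1], "trace_id": parts[3]}


example = "https://cloud.langfuse.com/project/cloramnkj0002jz088vzn1ja4/traces/d109e148-d188-4d6e-823f-aac0864afbab"
ids = parse_trace_url(example)
print(ids["trace_id"])  # d109e148-d188-4d6e-823f-aac0864afbab
```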

- Check out the full notebook for more examples.

- To learn how to evaluate the performance of your LangGraph application, see the LangGraph evaluation guide.