Trace with OpenTelemetry

LangSmith can accept traces from OpenTelemetry-based clients. This guide walks through examples of how to set this up.

For self-hosted installations or organizations in the EU region, update the LangSmith URL in the requests below accordingly. For the EU region, use eu.api.smith.langchain.com.
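As a minimal sketch of the URL substitution described above, the helper below derives the OTLP base endpoint from an API host. The function name `langsmith_otel_endpoint` is hypothetical, and it assumes the EU host keeps the same `/otel` path used by the default endpoint in this guide. Self-hosted deployments may use a different path, so check your installation before relying on it.

```python
# Hypothetical helper for illustration only; not part of any LangSmith SDK.
DEFAULT_HOST = "api.smith.langchain.com"
EU_HOST = "eu.api.smith.langchain.com"


def langsmith_otel_endpoint(host: str = DEFAULT_HOST) -> str:
    """Return the OTLP base endpoint for a LangSmith API host.

    Assumption: the endpoint is the host followed by the /otel path,
    as in the default URL shown in this guide.
    """
    # Accept either a bare host or a full https:// URL.
    host = host.removeprefix("https://").rstrip("/")
    return f"https://{host}/otel"


if __name__ == "__main__":
    print(langsmith_otel_endpoint())         # default region
    print(langsmith_otel_endpoint(EU_HOST))  # EU region
```

The returned value is what you would place in `OTEL_EXPORTER_OTLP_ENDPOINT` or `TRACELOOP_BASE_URL` in the sections that follow.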

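Log traces using a basic OpenTelemetry client

This first section covers how to use a standard OpenTelemetry client to log traces to LangSmith.

1. Installation

Install the OpenTelemetry SDK, the OpenTelemetry exporter package, and the OpenAI package: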

pip install openai

pip install opentelemetry-sdk

pip install opentelemetry-exporter-otlp

2. Configure your environment

Set the environment variables for the endpoint, substituting your own values:

OTEL_EXPORTER_OTLP_ENDPOINT=https://api.smith.langchain.com/otel

OTEL_EXPORTER_OTLP_HEADERS="x-api-key=<your langsmith api key>"

Optional: specify a custom project name other than "default":

OTEL_EXPORTER_OTLP_ENDPOINT=https://api.smith.langchain.com/otel

OTEL_EXPORTER_OTLP_HEADERS="x-api-key=<your langsmith api key>,Langsmith-Project=<project name>"
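If you prefer to set these variables from Python before creating the exporter, the configuration above can be sketched as follows. The helper `build_otlp_headers` and the source variables `LANGSMITH_API_KEY` and `LANGSMITH_PROJECT` are assumptions chosen for this example. Values are percent-encoded on the assumption that your exporter decodes `OTEL_EXPORTER_OTLP_HEADERS` that way, which matters mainly for project names containing spaces or commas.

```python
import os
from urllib.parse import quote


def build_otlp_headers(api_key, project=None):
    """Build the comma-separated key=value string for OTEL_EXPORTER_OTLP_HEADERS.

    Hypothetical helper: values are percent-encoded so that spaces or
    commas in a project name do not break the key=value,key=value format.
    """
    pairs = [("x-api-key", api_key)]
    if project:
        pairs.append(("Langsmith-Project", project))
    return ",".join(f"{key}={quote(value, safe='')}" for key, value in pairs)


# Assumption: the API key and project name live in these two variables.
api_key = os.environ.get("LANGSMITH_API_KEY")
if api_key:
    # setdefault keeps any value that was already exported in the shell.
    os.environ.setdefault(
        "OTEL_EXPORTER_OTLP_ENDPOINT", "https://api.smith.langchain.com/otel"
    )
    os.environ.setdefault(
        "OTEL_EXPORTER_OTLP_HEADERS",
        build_otlp_headers(api_key, os.environ.get("LANGSMITH_PROJECT")),
    )
```

These assignments need to run before `OTLPSpanExporter()` is constructed, because the exporter reads the environment at construction time.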

3. Log a trace

This code sets up an OpenTelemetry (OTEL) tracer and exporter that send traces to LangSmith. It then calls OpenAI and sets the required OpenTelemetry attributes on the span.

import os

from openai import OpenAI
from opentelemetry import trace
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import (
    BatchSpanProcessor,
)
from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

# The exporter picks up its endpoint and headers from the
# OTEL_EXPORTER_OTLP_* environment variables set in step 2.
otlp_exporter = OTLPSpanExporter(
    timeout=10,
)

# Register a tracer provider that batches spans and sends them through the exporter.
trace.set_tracer_provider(TracerProvider())
trace.get_tracer_provider().add_span_processor(
    BatchSpanProcessor(otlp_exporter)
)
tracer = trace.get_tracer(__name__)


def call_openai():
    model = "gpt-4o-mini"
    with tracer.start_as_current_span("call_open_ai") as span:
        # Run type, custom metadata, and request details.
        span.set_attribute("langsmith.span.kind", "LLM")
        span.set_attribute("langsmith.metadata.user_id", "user_123")
        span.set_attribute("gen_ai.system", "OpenAI")
        span.set_attribute("gen_ai.request.model", model)
        span.set_attribute("llm.request.type", "chat")

        messages = [
            {"role": "system", "content": "You are a helpful assistant."},
            {
                "role": "user",
                "content": "Write a haiku about recursion in programming."
            }
        ]

        # Record each input message as an indexed prompt attribute.
        for i, message in enumerate(messages):
            span.set_attribute(f"gen_ai.prompt.{i}.content", str(message["content"]))
            span.set_attribute(f"gen_ai.prompt.{i}.role", str(message["role"]))

        completion = client.chat.completions.create(
            model=model,
            messages=messages
        )

        # Record the response model, output message, and token usage.
        span.set_attribute("gen_ai.response.model", completion.model)
        span.set_attribute("gen_ai.completion.0.content", str(completion.choices[0].message.content))
        span.set_attribute("gen_ai.completion.0.role", "assistant")
        span.set_attribute("gen_ai.usage.prompt_tokens", completion.usage.prompt_tokens)
        span.set_attribute("gen_ai.usage.completion_tokens", completion.usage.completion_tokens)
        span.set_attribute("gen_ai.usage.total_tokens", completion.usage.total_tokens)
        return completion.choices[0].message


if __name__ == "__main__":
    call_openai()

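You should see a trace similar to this example in your LangSmith dashboard.

Supported OpenTelemetry attribute mapping

When you send traces to LangSmith via OpenTelemetry, the following attributes are mapped to LangSmith fields.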

| OpenTelemetry attribute | LangSmith field | Notes |
|---|---|---|
| langsmith.trace.name | Run Name | Overrides the span name for the run |
| langsmith.span.kind | Run Type | Values: llm, chain, tool, retriever, embedding, prompt, parser |
| langsmith.span.id | Run ID | Unique identifier for the span |
| langsmith.trace.id | Trace ID | Unique identifier for the trace |
| langsmith.span.dotted_order | Dotted Order | Position in the execution tree |
| langsmith.span.parent_id | Parent Run ID | ID of the parent span |
| langsmith.trace.session_id | Session ID | Session identifier for related traces |
| langsmith.trace.session_name | Session Name | Name of the session |
| langsmith.span.tags | Tags | Custom tags attached to the span |
| gen_ai.system | metadata.ls_provider | The GenAI system (e.g., "openai", "anthropic") |
| gen_ai.prompt | inputs | The input prompt sent to the model |
| gen_ai.completion | outputs | The output generated by the model |
| gen_ai.prompt.{n}.role | inputs.messages[n].role | Role for the nth input message |
| gen_ai.prompt.{n}.content | inputs.messages[n].content | Content for the nth input message |
| gen_ai.completion.{n}.role | outputs.messages[n].role | Role for the nth output message |
| gen_ai.completion.{n}.content | outputs.messages[n].content | Content for the nth output message |
| gen_ai.request.model | invocation_params.model | The model name used for the request |
| gen_ai.response.model | invocation_params.model | The model name returned in the response |
| gen_ai.request.temperature | invocation_params.temperature | Temperature setting |
| gen_ai.request.top_p | invocation_params.top_p | Top-p sampling setting |
| gen_ai.request.max_tokens | invocation_params.max_tokens | Maximum tokens setting |
| gen_ai.request.frequency_penalty | invocation_params.frequency_penalty | Frequency penalty setting |
| gen_ai.request.presence_penalty | invocation_params.presence_penalty | Presence penalty setting |
| gen_ai.request.seed | invocation_params.seed | Random seed used for generation |
| gen_ai.request.stop_sequences | invocation_params.stop | Sequences that stop generation |
| gen_ai.request.top_k | invocation_params.top_k | Top-k sampling parameter |
| gen_ai.request.encoding_formats | invocation_params.encoding_formats | Output encoding formats |
| gen_ai.usage.input_tokens | usage_metadata.input_tokens | Number of input tokens used |
| gen_ai.usage.output_tokens | usage_metadata.output_tokens | Number of output tokens used |
| gen_ai.usage.total_tokens | usage_metadata.total_tokens | Total number of tokens used |
| gen_ai.usage.prompt_tokens | usage_metadata.input_tokens | Number of input tokens used (deprecated) |
| gen_ai.usage.completion_tokens | usage_metadata.output_tokens | Number of output tokens used (deprecated) |
| input.value | inputs | Full input value, can be string or JSON |
| output.value | outputs | Full output value, can be string or JSON |
| langsmith.metadata.{key} | metadata.{key} | Custom metadata |
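To illustrate how the indexed `gen_ai.prompt.{n}.*` and `gen_ai.usage.*` attributes from the table can be assembled, here is a small sketch. The helper names `prompt_attributes` and `usage_attributes` are hypothetical. The usage helper emits the non-deprecated `input_tokens` and `output_tokens` names and assumes the total is simply their sum.

```python
def prompt_attributes(messages):
    """Flatten chat messages into gen_ai.prompt.{n}.role/content attributes."""
    attributes = {}
    for n, message in enumerate(messages):
        attributes[f"gen_ai.prompt.{n}.role"] = str(message["role"])
        attributes[f"gen_ai.prompt.{n}.content"] = str(message["content"])
    return attributes


def usage_attributes(input_tokens, output_tokens):
    """Build token-usage attributes using the non-deprecated names."""
    return {
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
        # Assumption: total is the sum of input and output tokens.
        "gen_ai.usage.total_tokens": input_tokens + output_tokens,
    }


if __name__ == "__main__":
    messages = [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Write a haiku about recursion in programming."},
    ]
    for key, value in prompt_attributes(messages).items():
        print(key, "=", value)
```

Both helpers return plain dictionaries, so inside an active span they can be applied in one call with `span.set_attributes(...)` instead of repeated `span.set_attribute(...)` calls.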

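Log traces using the Traceloop SDK

The Traceloop SDK is an OpenTelemetry-compatible SDK that covers a range of models, vector databases, and frameworks. If you want to instrument any of the integrations this SDK covers, you can use it together with OpenTelemetry to log traces to LangSmith.

To see which integrations the Traceloop SDK supports, refer to the Traceloop SDK documentation.

To get started, follow these steps:

1. Installation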

pip install traceloop-sdk

pip install openai

2. Configure your environment

Set the environment variables:

TRACELOOP_BASE_URL=https://api.smith.langchain.com/otel

TRACELOOP_HEADERS=x-api-key=<your_langsmith_api_key>

Optional: specify a custom project name other than "default":

TRACELOOP_HEADERS=x-api-key=<your_langsmith_api_key>,Langsmith-Project=<langsmith_project_name>

3. Initialize the SDK

To use the SDK, you need to initialize it before logging any traces:

from traceloop.sdk import Traceloop

Traceloop.init()

4. Log a trace

Here is a complete example using an OpenAI chat completion:

import os

from openai import OpenAI
from traceloop.sdk import Traceloop

client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))
Traceloop.init()

completion = client.chat.completions.create(
    model="gpt-4o-mini",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {
            "role": "user",
            "content": "Write a haiku about recursion in programming."
        }
    ]
)

print(completion.choices[0].message)

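You should see a trace similar to this example in your LangSmith dashboard.

Tracing using the Arize SDK

With the Arize SDK and OpenTelemetry, you can log traces from several other frameworks to LangSmith. Below is an example of sending CrewAI traces to LangSmith; the full list of supported frameworks is available in the Arize documentation. To adapt this example to another framework, you only need to swap the instrumentor for the one that matches that framework.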

1. Installation

First, install the required packages:

pip install -qU arize-phoenix-otel openinference-instrumentation-crewai crewai crewai-tools

2. Configure your environment

Next, set the following environment variables:

OPENAI_API_KEY=<your_openai_api_key>

SERPER_API_KEY=<your_serper_api_key>

3. Set up the instrumentor

Before running any application code, set up the instrumentor (you can replace it with the one for any supported framework):

from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

# Add LangSmith API Key for tracing
LANGSMITH_API_KEY = "YOUR_API_KEY"
# Set the endpoint for OTEL collection
ENDPOINT = "https://api.smith.langchain.com/otel/v1/traces"
# Select the project to trace to
LANGSMITH_PROJECT = "YOUR_PROJECT_NAME"

# Create the OTLP exporter
otlp_exporter = OTLPSpanExporter(
    endpoint=ENDPOINT,
    headers={"x-api-key": LANGSMITH_API_KEY, "Langsmith-Project": LANGSMITH_PROJECT}
)

# Set up the trace provider
provider = TracerProvider()
processor = BatchSpanProcessor(otlp_exporter)
provider.add_span_processor(processor)

# Now instrument CrewAI
from openinference.instrumentation.crewai import CrewAIInstrumentor
CrewAIInstrumentor().instrument(tracer_provider=provider)
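The setup above hard-codes the API key and project name. As one possible alternative, the sketch below reads them from the environment and returns the keyword arguments for `OTLPSpanExporter`. The helper name `langsmith_exporter_kwargs` and the variable names `LANGSMITH_API_KEY` and `LANGSMITH_PROJECT` are assumptions made for this example; the endpoint and header names are the ones used in this guide.

```python
import os

# Endpoint taken from the setup code in this guide.
ENDPOINT = "https://api.smith.langchain.com/otel/v1/traces"


def langsmith_exporter_kwargs(env=os.environ):
    """Build endpoint and headers for OTLPSpanExporter from environment variables.

    Hypothetical helper: LANGSMITH_API_KEY and LANGSMITH_PROJECT are the
    variable names assumed here; adjust them to your own setup.
    """
    api_key = env.get("LANGSMITH_API_KEY")
    if not api_key:
        raise RuntimeError("LANGSMITH_API_KEY is not set")
    headers = {"x-api-key": api_key}
    project = env.get("LANGSMITH_PROJECT")
    if project:
        # Omitting the header leaves project selection to LangSmith.
        headers["Langsmith-Project"] = project
    return {"endpoint": ENDPOINT, "headers": headers}
```

With this in place, the exporter can be created as `OTLPSpanExporter(**langsmith_exporter_kwargs())`, which keeps the key out of source control.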

4. Log a trace

Now you can run a CrewAI workflow, and its traces will be logged to LangSmith automatically.

from crewai import Agent, Task, Crew, Process
from crewai_tools import SerperDevTool

search_tool = SerperDevTool()

# Define your agents with roles and goals
researcher = Agent(
    role='Senior Research Analyst',
    goal='Uncover cutting-edge developments in AI and data science',
    backstory="""You work at a leading tech think tank.
    Your expertise lies in identifying emerging trends.
    You have a knack for dissecting complex data and presenting actionable insights.""",
    verbose=True,
    allow_delegation=False,
    # You can pass an optional llm attribute specifying what model you wanna use.
    # llm=ChatOpenAI(model_name="gpt-3.5", temperature=0.7),
    tools=[search_tool]
)

writer = Agent(
    role='Tech Content Strategist',
    goal='Craft compelling content on tech advancements',
    backstory="""You are a renowned Content Strategist, known for your insightful and engaging articles.
    You transform complex concepts into compelling narratives.""",
    verbose=True,
    allow_delegation=True
)

# Create tasks for your agents
task1 = Task(
    description="""Conduct a comprehensive analysis of the latest advancements in AI in 2024.
    Identify key trends, breakthrough technologies, and potential industry impacts.""",
    expected_output="Full analysis report in bullet points",
    agent=researcher
)

task2 = Task(
    description="""Using the insights provided, develop an engaging blog
    post that highlights the most significant AI advancements.
    Your post should be informative yet accessible, catering to a tech-savvy audience.
    Make it sound cool, avoid complex words so it doesn't sound like AI.""",
    expected_output="Full blog post of at least 4 paragraphs",
    agent=writer
)

# Instantiate your crew with a sequential process
crew = Crew(
    agents=[researcher, writer],
    tasks=[task1, task2],
    verbose=False,
    process=Process.sequential
)

# Get your crew to work!
result = crew.kickoff()

print("######################")
print(result)

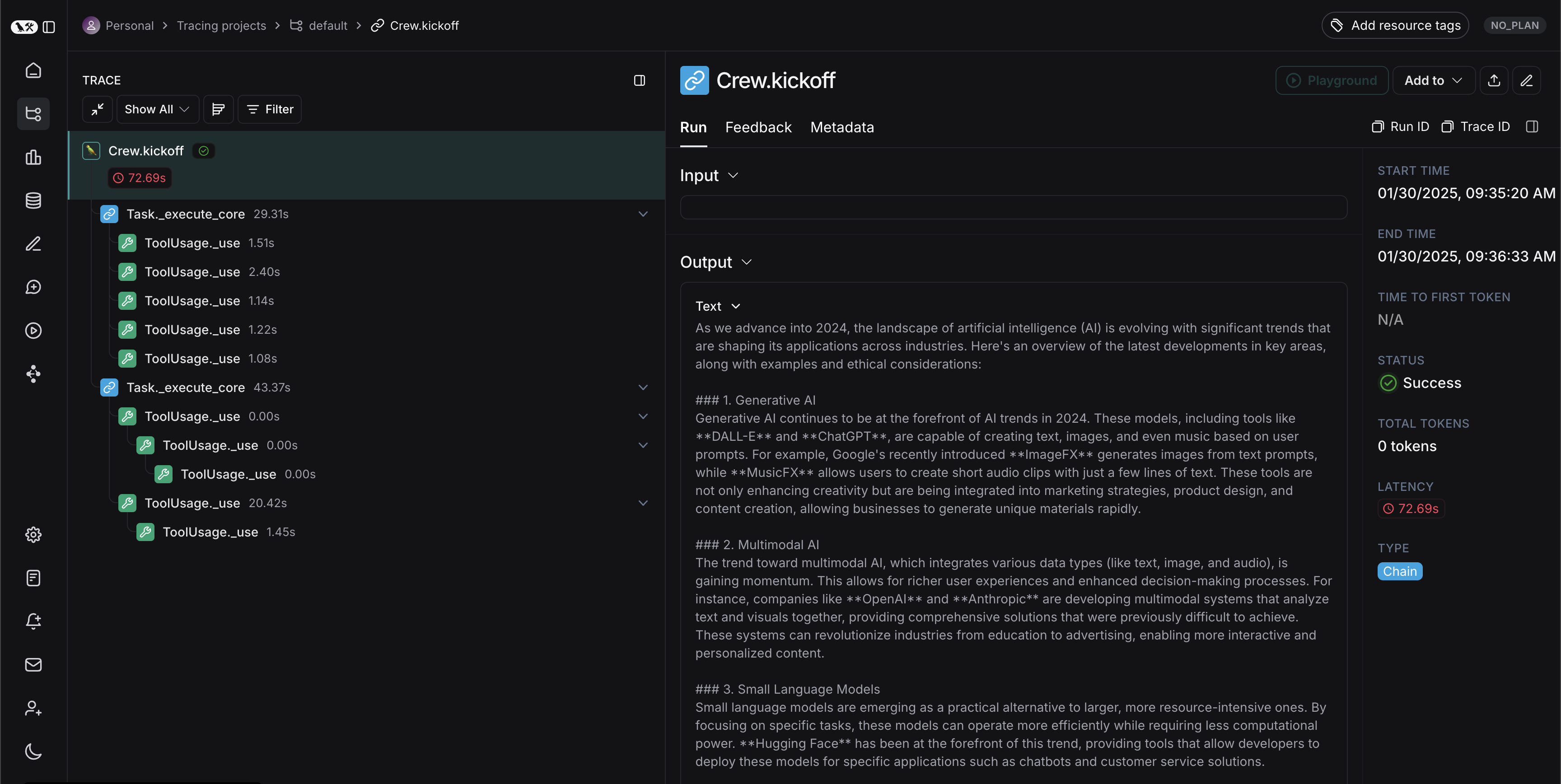

你应在 LangSmith 项目中看到一条类似如下的追踪记录: